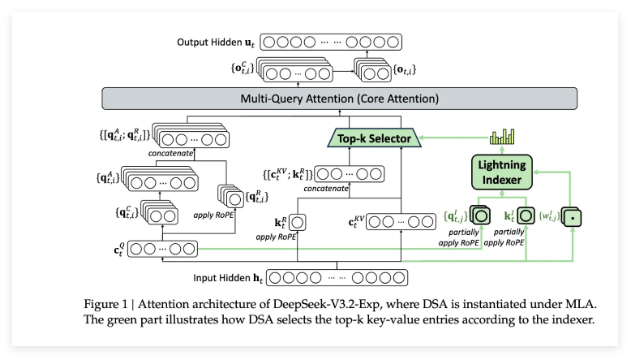

Abstract

The landscape of image generation has been forever changed by open vocabulary diffusion models.

However, at their core these models use transformers, which makes generation slow. Better implementations to increase the throughput of these transformers have emerged, but they still evaluate the entire model.

In this paper, we instead speed up diffusion models by exploiting natural redundancy in generated images by merging redundant tokens.

After making some diffusion-specific improvements to Token Merging (ToMe), our ToMe for Stable Diffusion can reduce the number of tokens in an existing Stable Diffusion model by up to 60% while still producing high quality images without any extra training.

In the process, we speed up image generation by up to 2× and reduce memory consumption by up to 5.6×.

Furthermore, this speed-up stacks with efficient implementations such as xFormers, mini
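To make the idea of "merging redundant tokens" concrete, here is a minimal NumPy sketch. It is not the paper's implementation: the function name `merge_tokens`, the alternating source/destination split, and the plain averaging are all assumptions chosen for illustration. With this simple split, at most 50% of the tokens can be removed, and the output does not preserve the original token order.

```python
import numpy as np


def merge_tokens(x: np.ndarray, ratio: float) -> np.ndarray:
    """Illustrative token merging (hypothetical helper, not the authors' code).

    x:     array of shape [n_tokens, dim]
    ratio: fraction of the n_tokens to remove by merging (capped at 50% here)
    """
    x = np.asarray(x, dtype=float)
    n = x.shape[0]

    # Assumption: split tokens alternately into a source and a destination set.
    src, dst = x[0::2], x[1::2]
    r = min(int(n * ratio), src.shape[0])
    if r <= 0 or dst.shape[0] == 0:
        return x.copy()

    # Cosine similarity between every source token and every destination token.
    def unit(t: np.ndarray) -> np.ndarray:
        return t / (np.linalg.norm(t, axis=1, keepdims=True) + 1e-8)

    sim = unit(src) @ unit(dst).T            # shape [n_src, n_dst]
    best_dst = sim.argmax(axis=1)            # most similar destination per source
    best_sim = sim.max(axis=1)

    # Merge the r source tokens that are most similar to their destination.
    order = np.argsort(-best_sim)
    merged_idx = order[:r]
    kept_idx = np.sort(order[r:])

    # Each destination becomes the average of itself and the sources merged into it.
    sums = dst.copy()
    counts = np.ones(dst.shape[0])
    np.add.at(sums, best_dst[merged_idx], src[merged_idx])
    np.add.at(counts, best_dst[merged_idx], 1.0)
    dst_out = sums / counts[:, None]

    # Unmerged sources first, then the (possibly averaged) destinations.
    return np.concatenate([src[kept_idx], dst_out], axis=0)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    tokens = rng.normal(size=(16, 8))
    print(merge_tokens(tokens, 0.25).shape)
```

In this sketch, fewer tokens reach the later layers, which is where a saving in compute and memory would come from. A real diffusion pipeline would also need to restore the original token count after each block so the output image keeps its resolution; that step is omitted here.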

Paper Reading: Token Merging for Fast Stable Diffusion (the ToMe technique for fast diffusion models)

- Article link: https://wapzz.net/post-6754.html

- Copyright notice: Unless otherwise stated, all articles on this blog are licensed under CC BY-NC-SA 4.0.
